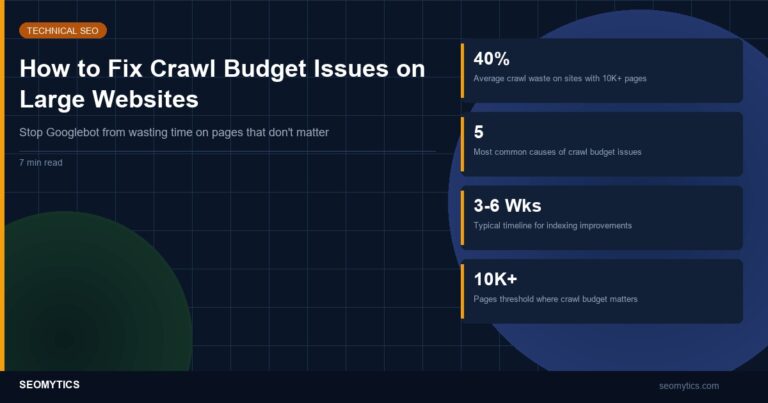

Google allocates a specific amount of crawling resources to your site based on your server capacity and the perceived value of your content. When a significant portion of those resources goes to pages that don’t need indexing, your important pages get crawled less frequently. On sites with fewer than 10,000 pages, this rarely matters. On sites with 50,000+ pages, crawl budget can become the bottleneck between publishing content and getting it indexed.

Crawl budget issues don’t announce themselves with error messages. They show up as slow indexing, important pages missing from Google’s index, and fresh content taking weeks instead of days to appear in search results. Diagnosing the problem requires looking at what Googlebot actually spends its time on, which is often very different from what you’d expect.

How to Diagnose Crawl Budget Problems

Google Search Console’s Crawl Stats report is your primary diagnostic tool. Navigate to Settings > Crawl Stats to see how many pages Googlebot requests per day, the average response time, and the total download size. Three patterns indicate crawl budget waste.

High crawl rate with low indexing rate. If Googlebot crawls 5,000 pages per day but only 20% of your pages are indexed, a large portion of crawl resources goes to pages that don’t end up in the index. Compare your total crawled pages (from Crawl Stats) against your indexed pages (from the Pages report under Indexing). A gap larger than 30% suggests significant crawl waste.
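
The comparison above reduces to a single ratio. The sketch below is one way to compute it, assuming "gap" means the share of crawled URLs that are not indexed. The function names and the 0.30 default are illustrative and do not come from any Search Console API; the counts have to be read from the two reports by hand.

```python
def crawl_index_gap(crawled_urls: int, indexed_urls: int) -> float:
    """Share of crawled URLs that did not end up indexed (0.0 to 1.0)."""
    if crawled_urls <= 0:
        raise ValueError("crawled_urls must be positive")
    # Clamp at zero: indexed can exceed crawled when the two reports
    # cover different time windows.
    return max(0.0, (crawled_urls - indexed_urls) / crawled_urls)


def has_crawl_waste(crawled_urls: int, indexed_urls: int,
                    threshold: float = 0.30) -> bool:
    """True when the gap exceeds the threshold (30% assumed, per the text)."""
    return crawl_index_gap(crawled_urls, indexed_urls) > threshold
```

Both counts should cover the same time window, otherwise the ratio mixes a daily crawl figure with a cumulative index figure.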

Slow average response time. Googlebot throttles its crawl rate when a server responds slowly, to avoid overloading it. Google doesn’t publish a cutoff, but an average response time above roughly 500ms is a reasonable warning threshold. A site that could handle 10,000 crawls per day at 200ms response time might only get 3,000 at 800ms. Check for response time spikes that correlate with reduced crawl activity in the same period.

Specific page types dominating the crawl. Download your server logs and filter for Googlebot’s user agent. Group the crawled URLs by pattern (product pages, category pages, filter URLs, pagination). If 60% of Googlebot’s requests go to faceted navigation URLs that return the same products in different orders, that’s 60% of your crawl budget producing zero indexing value. Screaming Frog’s Log File Analyzer handles this grouping automatically if you feed it your access logs.
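
If you would rather script the grouping yourself, it can be sketched in a few lines of Python. This is a minimal sketch that assumes access logs in the common "combined" format; the URL patterns (`/product/`, `/category/`, `color=`, `page=`) are hypothetical and need to be replaced with your site's own. It matches on the user-agent string only, so spoofed Googlebot traffic is not filtered out.

```python
import re
from collections import Counter

# Combined log format: ... "GET /path HTTP/1.1" 200 1234 "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<url>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Hypothetical URL patterns; the first match wins, so order matters.
PATTERNS = [
    ("filter", re.compile(r"\?.*(color|size|sort)=")),
    ("pagination", re.compile(r"[?&]page=\d+|/page/\d+")),
    ("product", re.compile(r"^/product/")),
    ("category", re.compile(r"^/category/")),
]


def classify(url):
    """Return the name of the first pattern that matches the URL."""
    for name, rx in PATTERNS:
        if rx.search(url):
            return name
    return "other"


def googlebot_share(lines):
    """Map each page type to its share of Googlebot requests."""
    counts = Counter()
    for line in lines:
        m = LOG_LINE.search(line)
        if m and "Googlebot" in m.group("ua"):
            counts[classify(m.group("url"))] += 1
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()} if total else {}
```

Calling `googlebot_share(open("access.log"))` on a real log returns a dictionary of shares per page type, which makes a dominant pattern easy to spot.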

The 5 Most Common Crawl Budget Killers

1. Faceted navigation and parameter URLs. An e-commerce site with 1,000 products and 15 filter options can generate millions of URL combinations. Each combination (color=red&size=large&sort=price) creates a technically valid URL that Googlebot will try to crawl. A mid-size retail site I audited in February 2026 had 2.3 million faceted URLs generated from just 4,200 products. Googlebot was spending 73% of its daily crawl budget on filter pages that all showed near-identical product listings.

The fix: Google retired Search Console’s URL Parameters tool in 2022, so parameter handling now has to happen on the site itself. For parameters that only reorder or restyle the same content (sort order, display format), point a rel="canonical" tag at the unparameterized URL and avoid linking to the parameterized versions internally. For parameters that narrow content (category filters, price ranges), decide whether those filtered views deserve indexing. Most don’t. Additionally, add a robots.txt rule to block crawling of URLs with more than 2 parameters. A URL with three or more parameters contains at least two & separators, so the rule is: Disallow: /*?*&*&
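
Wildcard rules are easy to get wrong by one parameter, so it helps to test a pattern against sample URLs before deploying it. The sketch below approximates Google-style robots.txt matching (`*` matches any run of characters, a trailing `$` anchors the end, everything else is a prefix match). The helper names are made up for illustration, and Allow/Disallow precedence is not handled.

```python
import re


def robots_pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape literal pieces and turn each * into "any run of characters".
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))


def is_blocked(pattern, path_and_query):
    """True if a Disallow rule with this pattern would match the URL path."""
    return robots_pattern_to_regex(pattern).match(path_and_query) is not None


# Each & in the pattern requires one more & in the URL:
# two ampersands block 3+ parameters, three ampersands block 4+ parameters.
samples = [
    "/shoes?color=red&size=large",
    "/shoes?color=red&size=large&sort=price",
    "/shoes?color=red&size=large&sort=price&view=grid",
]
for url in samples:
    print(url, is_blocked("/*?*&*&", url), is_blocked("/*?*&*&*&", url))
```

Counting the `&` characters in the pattern tells you the minimum number of parameters a URL needs before the rule applies.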

2. Infinite pagination and crawl traps. Calendar widgets, session-based URLs, and internal search result pages can create infinite URL spaces. A WordPress event calendar that generates pages for every future month creates an unlimited number of crawlable URLs. Googlebot follows these links deeper and deeper, consuming crawl budget on pages that will never rank.

The fix: Google stopped using rel="next" and rel="prev" as an indexing signal in 2019, so don’t rely on them to control how pagination is crawled. Instead, link paginated pages with plain, crawlable anchor links, cap the number of paginated pages that link to subsequent pages, and use robots.txt to block access to calendar pages beyond a reasonable date range. For internal search results, always block /search/ and /?s= paths in robots.txt. These pages duplicate your existing content and add no indexing value.
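
For calendar traps, the most reliable cap is often in the template itself: stop rendering "next month" links past a fixed horizon. A minimal sketch of that check follows, assuming a 12-month window in each direction; the function names and the window size are illustrative and not part of any calendar plugin.

```python
from datetime import date


def months_between(start, end):
    """Whole-month offset from start to end (negative if end is earlier)."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def should_link_month(target, today, max_ahead=12, max_back=12):
    """True if the calendar page for target's month should be linked."""
    offset = months_between(today, target)
    return -max_back <= offset <= max_ahead
```

With the links gone, Googlebot has no path into the infinite range, and a robots.txt rule only has to cover URLs that were already discovered.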

3. Duplicate content across HTTP/HTTPS and www/non-www. If your site responds on all four protocol/subdomain combinations (http://example.com, https://example.com, http://www.example.com, https://www.example.com), Googlebot may crawl all four versions of every page. That’s 4x your actual page count consuming crawl budget.

The fix: implement 301 redirects from all non-canonical versions to your preferred version. Verify in Search Console that only your canonical domain property shows crawl activity. Check your sitemap for URLs that mix protocols or hostnames. Run a Screaming Frog crawl and filter for redirect chains, which are a common signal that protocol consolidation is incomplete.
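
The consolidation check can also be scripted against a redirect export. The sketch below generates the four protocol/host variants of a canonical URL and follows a redirect map to see whether each variant reaches the canonical version in a single hop. The `redirects` dictionary (source URL to target URL) is a hypothetical input you would build from a crawl export; nothing here makes network requests.

```python
from urllib.parse import urlsplit


def host_variants(canonical):
    """Return the four http/https and www/non-www variants of a URL."""
    parts = urlsplit(canonical)
    host = parts.netloc
    bare = host[4:] if host.startswith("www.") else host
    path = parts.path or "/"
    return [
        f"{scheme}://{h}{path}"
        for scheme in ("http", "https")
        for h in (bare, "www." + bare)
    ]


def follow(url, redirects, limit=10):
    """Follow the redirect map from url; stop on loops or after limit hops."""
    chain = [url]
    while chain[-1] in redirects and len(chain) <= limit:
        nxt = redirects[chain[-1]]
        if nxt in chain:  # redirect loop
            break
        chain.append(nxt)
    return chain


def audit_variants(canonical, redirects):
    """Label each non-canonical variant as 'ok', 'chain', or 'unresolved'."""
    parts = urlsplit(canonical)
    target = f"{parts.scheme}://{parts.netloc}{parts.path or '/'}"
    report = {}
    for variant in host_variants(canonical):
        if variant == target:
            continue
        chain = follow(variant, redirects)
        if chain == [variant, target]:
            report[variant] = "ok"          # single hop to canonical
        elif chain[-1] == target:
            report[variant] = "chain"       # reaches canonical in 2+ hops
        else:
            report[variant] = "unresolved"  # never reaches canonical
    return report
```

Any variant labeled "chain" or "unresolved" is a candidate for a direct 301 to the canonical URL.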

4. Orphaned and near-empty pages. Pages with no internal links pointing to them still get discovered through sitemaps or external links. If Google finds and crawls these pages, they consume budget while providing no value. Common orphaned pages include old landing pages, abandoned A/B test variations, staging URLs that leaked to production, and pagination pages from deleted categories.

The fix: run a Screaming Frog crawl and export all URLs. Compare against your sitemap and server logs. Any URL that appears in logs but not in your crawl (meaning no internal link path exists) is orphaned. Either delete these pages and return 410 (Gone) status codes, or add internal links if they should remain in the index. Remove orphaned URLs from your sitemap immediately.
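
Once the three URL lists are exported, the comparison is a set difference. The sketch below assumes each list has already been normalized to the same form (same protocol, host, and trailing-slash convention); the function name and output keys are illustrative.

```python
def find_orphans(crawl_urls, log_urls, sitemap_urls):
    """Find URLs Googlebot can reach that have no internal link path."""
    crawled = set(crawl_urls)          # reachable through internal links
    in_sitemap = set(sitemap_urls)
    known = set(log_urls) | in_sitemap  # seen in logs or listed in the sitemap
    orphans = known - crawled
    return {
        "orphaned": sorted(orphans),
        # These should come out of the sitemap first.
        "orphaned_in_sitemap": sorted(orphans & in_sitemap),
    }
```

URLs that differ only by a trailing slash or letter case will show up as false orphans, so normalize before comparing.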

5. Soft 404 pages. A soft 404 returns a 200 status code but displays a “page not found” message or an empty page. Googlebot crawls it, processes the content, determines it’s empty, and records it as wasted effort. Over time, a high soft 404 rate signals to Google that your site has quality issues, which can reduce your overall crawl allocation.

The fix: check the “Not Indexed” section of Search Console’s Pages report for “Soft 404” entries. Fix each one by either adding real content to the URL or returning an actual 404 or 410 status code. For WordPress sites, soft 404s commonly appear when tag or category archives have no posts assigned. Either delete the empty taxonomy or add content to it.
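
You can also screen for soft 404 candidates in your own crawl data before Google reports them. The heuristic below is a rough sketch, not Google's detection logic: it flags 200 responses whose visible text contains a "not found" phrase or falls under a word-count floor. The phrase list and the 50-word minimum are assumptions to tune per site.

```python
import re

# Hypothetical phrases; extend with the wording your templates use.
NOT_FOUND_PHRASES = ("page not found", "nothing found", "no results found")
TAG = re.compile(r"<[^>]+>")


def looks_like_soft_404(status, html, min_words=50):
    """Flag a 200 response that reads like an error page or is near-empty."""
    if status != 200:
        return False  # real 404/410 responses are already correct
    # Crude tag stripping; script and style contents are not removed.
    text = TAG.sub(" ", html).lower()
    if any(phrase in text for phrase in NOT_FOUND_PHRASES):
        return True
    return len(text.split()) < min_words
```

Treat the output as a review list rather than a verdict, since short but legitimate pages will also be flagged.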

Implementing Crawl Budget Fixes in Priority Order

Not all crawl budget fixes deliver equal impact. Here’s the order that produces the fastest results based on audits across 12 sites ranging from 15,000 to 800,000 pages.

- Week 1: Fix server response time. If your average response time exceeds 400ms, address this first. Googlebot typically raises its crawl rate within days of response times improving. Enable server-side caching, optimize database queries on frequently crawled page templates, and consider a CDN for static assets. A response time reduction from 650ms to 180ms on a 200,000-page e-commerce site increased Googlebot’s daily crawl rate by 340% within 10 days.

- Week 2: Block crawl waste in robots.txt. Identify your biggest crawl waste categories from server logs and block them. This takes effect quickly because Googlebot generally refreshes its cached copy of robots.txt within about 24 hours and stops requesting newly disallowed URLs after that. Focus on faceted navigation, internal search, and any URL pattern that generates duplicate or near-duplicate content.

- Week 3: Clean up sitemaps. Remove every URL from your sitemap that returns anything other than 200. Remove URLs you’ve blocked in robots.txt (sending contradictory signals wastes Google’s processing resources). Remove URLs with noindex tags. Your sitemap should contain only URLs you want indexed, and every URL in it should be accessible and return unique content.

- Weeks 4-6: Fix redirect chains, consolidate duplicates, and remove orphaned pages. These changes take longer to implement and longer for Google to process. Redirect chains should be shortened to single 301 redirects. Duplicate content should be consolidated with canonical tags or 301 redirects. Orphaned pages should be deleted with 410 status codes or reconnected to your internal link structure.
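
The Week 3 sitemap cleanup is mechanical enough to script. The sketch below parses a standard sitemap and sorts each URL into keep, remove, or unverified, based on facts gathered elsewhere. The `facts` dictionary (URL to a tuple of status code, noindex flag, and blocked-by-robots flag) is a hypothetical structure you would fill from a crawl export; the script itself fetches nothing.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract every <loc> value from a urlset sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS) if loc.text]


def belongs_in_sitemap(status, noindex, blocked_by_robots):
    """Only indexable, crawlable 200 responses should stay listed."""
    return status == 200 and not noindex and not blocked_by_robots


def triage_sitemap(xml_text, facts):
    """Sort sitemap URLs into keep / remove / unverified lists."""
    result = {"keep": [], "remove": [], "unverified": []}
    for url in sitemap_urls(xml_text):
        if url not in facts:
            result["unverified"].append(url)  # no crawl data: check by hand
        elif belongs_in_sitemap(*facts[url]):
            result["keep"].append(url)
        else:
            result["remove"].append(url)
    return result
```

Sitemap index files (which list other sitemaps rather than pages) are not handled here and would need one extra loop over their `<sitemap>` entries.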

Monitor progress through Search Console’s Crawl Stats report weekly. Expect crawl efficiency improvements to appear within 1-2 weeks for robots.txt changes and 3-6 weeks for structural changes like redirect consolidation. The goal isn’t maximizing crawl rate but maximizing the percentage of crawls that go to pages you actually want indexed. Track the ratio of crawled pages to indexed pages as your primary success metric.