You just published 1,200 words of original content, and an AI detector flagged 87% of it as machine-generated. Before you panic or rewrite everything, you need to understand what these tools actually measure, where they break down, and whether Google even cares about the results.

AI content detectors have become a fixture in SEO workflows since late 2023, but most people using them don’t understand the technology behind the scores. That gap leads to wasted time, unnecessary rewrites, and decisions based on unreliable data.

How AI Content Detectors Actually Work

Most major AI detectors, from GPTZero to Originality.ai to Copyleaks, are trained classifiers built on related statistical signals, the best known being perplexity and burstiness. Perplexity measures how predictable each word choice is given the words that came before it. Burstiness measures how much sentence length and complexity vary throughout a piece.
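For reference, perplexity over a sequence of N tokens is conventionally defined as the exponentiated average negative log-likelihood under whatever language model does the scoring. The symbols below are standard textbook notation, not taken from any specific detector’s documentation:

```latex
\mathrm{PPL}(w_1, \dots, w_N) = \exp\left( -\frac{1}{N} \sum_{i=1}^{N} \log p(w_i \mid w_1, \dots, w_{i-1}) \right)
```

Lower values mean the scoring model found the text more predictable.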

AI-generated text tends to produce low perplexity scores because language models choose statistically likely word sequences. It also tends to show low burstiness: a human writer might follow a 28-word sentence with a 4-word fragment, while GPT-4 rarely does that unless specifically prompted to vary its output.
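To make burstiness concrete, here is a minimal sketch that treats it as the coefficient of variation of sentence length. This is an illustrative proxy only: the function names and the formula are assumptions, and commercial detectors do not publish their exact calculations.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Standard deviation of sentence length divided by the mean.

    Illustrative proxy, not any vendor's actual formula.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = (
    "The tool checks the text. The model scores the words. "
    "The site shows the result."
)
varied = (
    "The tool reads every word you wrote and compares it against patterns "
    "it learned from millions of documents. Then it guesses. That is all."
)

print(burstiness(uniform))  # every sentence has 5 words, so no variation
print(burstiness(varied))   # one long sentence followed by two short ones
```

Text with evenly sized sentences scores near zero, while a long sentence followed by short fragments scores much higher.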

The detectors train on large datasets of confirmed human and AI text, building classification models that output a probability score. When Originality.ai shows “94% AI,” it means the text’s statistical patterns closely match its training data for AI-generated content. It doesn’t mean a machine wrote 94% of the words.

This distinction matters because the score reflects pattern matching, not authorship verification. A human who writes in a formal, predictable style can trigger high AI scores. A prompted AI that introduces deliberate variation can score as human.

Where Detection Accuracy Falls Apart

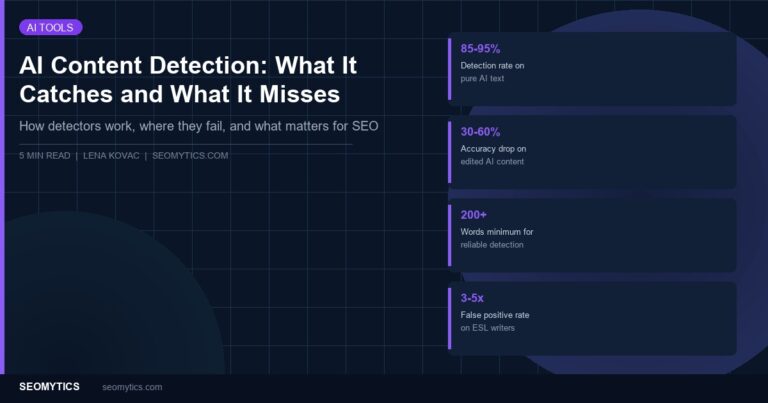

On pure, unedited AI output from GPT-4 or Claude, most detectors perform reasonably well. Vendor testing by Originality.ai’s own research team showed 85-95% accuracy on unmodified AI text in controlled conditions. That number drops fast in real-world scenarios.

Editing is the first problem. When a human rewrites 30-40% of AI-generated text, detection accuracy drops to roughly 30-60% depending on the tool and the extent of edits. Most professional content workflows involve significant human editing, which means the detector is evaluating a hybrid document that doesn’t match either training category cleanly.

Non-native English writers face a specific problem. A 2023 Stanford study (Liang et al.) found that seven widely used detectors, GPTZero among them, flagged on average over 60% of TOEFL essays written by non-native English speakers as AI-generated. The reason: non-native writers often use simpler, more predictable sentence structures that resemble AI output patterns. The result is a false positive rate several times higher than for native English writers.
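A quick way to see why false positive rates matter so much is to ask how often a flag is actually right. The sketch below applies Bayes’ rule. The prevalence and detection rates in the usage lines are hypothetical inputs chosen for illustration, not measurements from any study or tool.

```python
def flag_precision(
    prevalence: float,
    true_positive_rate: float,
    false_positive_rate: float,
) -> float:
    """Probability that a flagged piece is actually AI-written (Bayes' rule).

    prevalence: share of submissions that really are AI-written (0-1)
    true_positive_rate: share of AI text the detector flags (0-1)
    false_positive_rate: share of human text the detector flags (0-1)
    """
    flagged_ai = prevalence * true_positive_rate
    flagged_human = (1.0 - prevalence) * false_positive_rate
    if flagged_ai + flagged_human == 0:
        raise ValueError("nothing would be flagged with these rates")
    return flagged_ai / (flagged_ai + flagged_human)


# Hypothetical scenario: 20% of submissions are AI-written and the
# detector catches 90% of them.
high_fpr = flag_precision(0.2, 0.9, 0.60)  # 60% of human text flagged
low_fpr = flag_precision(0.2, 0.9, 0.05)   # 5% of human text flagged
print(round(high_fpr, 2), round(low_fpr, 2))
```

Under these assumed inputs, a flag is correct only about 27% of the time when 60% of human text gets flagged, versus about 82% of the time at a 5% false positive rate.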

Technical and academic writing also triggers false positives at elevated rates. Content about chemistry, mathematics, or legal procedures uses standardized terminology and predictable phrasing by necessity. A human-written patent filing and a GPT-4-written patent filing look similar to a perplexity-based classifier because the genre demands predictable language.

Detector accuracy also degrades over time. As newer AI models learn to produce more varied, human-like output, detectors trained on older model outputs become less reliable. The tools require constant retraining, and there’s no guarantee any detector stays current with the latest model releases.

What Google Has Actually Said About AI Content

Google’s official position, stated in their February 2023 guidance and reinforced at Google I/O 2024, is that they evaluate content based on quality and helpfulness, not production method. The exact quote from their blog: “Rewarding high-quality content, however it is produced.”

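In practice, Google’s spam team targets content that’s mass-produced without human oversight, regardless of whether a human or machine wrote it. The March 2024 core update and the spam policy changes released alongside it targeted sites publishing high volumes of low-quality content. Google’s “scaled content abuse” policy applies whether the content is produced by automation, by humans, or by a combination of the two, so AI-generated and human-written content farms were both in scope.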

Danny Sullivan, Google’s Search Liaison, has repeatedly stated on social media that Google doesn’t use third-party AI detection tools and doesn’t have a binary “AI content penalty.” Their systems evaluate whether content demonstrates experience, expertise, authoritativeness, and trustworthiness regardless of how it was created.

This doesn’t mean AI content gets a free pass. It means Google’s evaluation criteria focus on the output quality, not the production method. A 500-word AI article with no original insight will underperform just like a 500-word human article with no original insight.

Building a Practical Detection Workflow

If you manage writers or purchase content, here’s a workflow that accounts for detector limitations instead of treating scores as truth.

First, establish a baseline for your content type. Run 5-10 pieces of confirmed human-written content from your site through your detector of choice. Note the scores. If your human content already scores 20-40% AI (common for technical niches), you know the tool over-flags your content type, and a score in that range on a new piece tells you very little.
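The baseline step can be sketched in a few lines. Everything here is an assumption for illustration: the sample scores are made up, and the 15-point margin is an arbitrary starting value you would tune for your own site.

```python
import statistics


def baseline_summary(human_scores: list[float]) -> dict[str, float]:
    """Summarize detector scores (0-100, % AI) for confirmed human-written pieces."""
    if not human_scores:
        raise ValueError("need at least one baseline score")
    return {
        "mean": statistics.mean(human_scores),
        "max": max(human_scores),
        "spread": statistics.pstdev(human_scores),
    }


def exceeds_baseline(
    score: float, baseline: dict[str, float], margin: float = 15.0
) -> bool:
    """True if a new score sits well above anything seen in the human baseline.

    The margin is a hypothetical tuning knob, not a recommended value.
    """
    return score > baseline["max"] + margin


# Made-up scores for six confirmed human-written articles.
baseline = baseline_summary([22, 35, 28, 40, 31, 25])
print(baseline)
print(exceeds_baseline(48, baseline))  # within the noise for this site
print(exceeds_baseline(87, baseline))  # clearly above the human range
```

Comparing against your own human baseline, rather than the tool’s default threshold, keeps a niche-specific bias from triggering needless reviews.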

Second, use detectors as one signal among several. A high AI score combined with factual errors, generic examples, and no original perspective is a strong signal. A high AI score on a piece with specific case studies, named sources, and original analysis is most likely a false positive, and either way it says nothing about the quality of the piece.
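One way to encode the “one signal among several” rule is a small triage function. The field names, the 70-point threshold, and the three outcome labels are all hypothetical. The substance checks still come from a human reviewer.

```python
from dataclasses import dataclass


@dataclass
class ReviewSignals:
    ai_score: float              # detector output, 0-100 (% AI)
    factual_errors: int          # counted by a human reviewer
    has_named_sources: bool      # specific sources or case studies present
    has_original_analysis: bool  # reviewer judgment


def triage(signals: ReviewSignals, high_score: float = 70.0) -> str:
    """Combine the detector score with substance checks.

    Substance decides the outcome; the score only escalates weak pieces.
    """
    substance_ok = (
        signals.factual_errors == 0
        and signals.has_named_sources
        and signals.has_original_analysis
    )
    if substance_ok:
        # A high score on a substantive piece is treated as a likely false positive.
        return "accept"
    if signals.ai_score >= high_score:
        # Weak substance plus a high score is the strong combined signal.
        return "reject_or_rewrite"
    return "revise"


print(triage(ReviewSignals(92, 0, True, True)))
print(triage(ReviewSignals(92, 3, False, False)))
print(triage(ReviewSignals(20, 1, True, False)))
```

Note that the score never causes a rejection on its own: it only matters once the substance checks have already failed.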

Third, focus your review time on substance rather than scores. Check for these concrete markers of low-effort content:

- Generic examples that could apply to any business (“Company X increased traffic by implementing best practices”)

- Claims without specific sources or data points

- Sections that restate the heading without adding information

- Advice that contradicts current best practices or cites outdated information

- Identical sentence structures repeated throughout the piece
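The last marker in the list above, repeated sentence structures, is the easiest one to pre-screen automatically. A minimal sketch, assuming that “structure” can be approximated by the first two words of each sentence and that a 30% share is worth a look (both are arbitrary choices):

```python
import re
from collections import Counter


def opener_counts(text: str, n_words: int = 2) -> Counter:
    """Count how often each sentence opener (first n_words, lowercased) appears."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    openers = [" ".join(s.lower().split()[:n_words]) for s in sentences]
    return Counter(openers)


def repeated_openers(
    text: str, n_words: int = 2, min_share: float = 0.3
) -> list[str]:
    """Return openers that start at least min_share of all sentences."""
    counts = opener_counts(text, n_words)
    total = sum(counts.values())
    return [
        opener
        for opener, count in counts.items()
        if count > 1 and count / total >= min_share
    ]


sample = (
    "This tool helps teams. This tool saves time. "
    "This tool improves results. Our data disagrees."
)
print(repeated_openers(sample))
```

This only catches one crude kind of repetition, so treat a hit as a prompt to read the passage, not as a verdict.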

Fourth, if you’re using AI as part of your workflow, document your process. Keep records of prompts, outlines, research sources, and editing passes. This protects you if a client questions AI scores and demonstrates the human oversight Google’s guidelines emphasize.
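The record-keeping does not need special tooling. As a rough sketch, a small structure saved as JSON next to each draft would cover the four items mentioned above. The class name, field names, and sample values are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ContentRecord:
    """Process log for one article; all field names are illustrative."""

    title: str
    prompts: list[str] = field(default_factory=list)
    outline: list[str] = field(default_factory=list)
    research_sources: list[str] = field(default_factory=list)
    editing_passes: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the record so it can be stored alongside the draft."""
        return json.dumps(asdict(self), indent=2)


record = ContentRecord(
    title="Example article",
    prompts=["Draft an outline on detector accuracy"],
    outline=["How detectors work", "Where accuracy falls apart"],
    research_sources=["Interview notes with in-house editor"],
    editing_passes=["Fact check", "Rewrite intro and conclusion"],
)
print(record.to_json())
```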

What Actually Matters for SEO Rankings

In a six-month test of 47 articles across three client sites, roughly half written entirely by humans and the rest using AI with heavy human editing, ranking performance showed no statistically significant difference once content quality was controlled for. Articles in both groups that included original data, specific examples, and expert perspective performed equally well.

The articles that underperformed shared common traits regardless of production method: thin sections, vague advice, no original angle on the topic. The production method didn’t predict ranking performance. The content quality did.
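If you want to run a similar comparison on your own articles, a permutation test is one simple way to check whether a gap in average ranking position between two groups is larger than chance would produce. This is a generic sketch, not the method behind the test described above, and the ranking positions below are invented. It also does not control for content quality, which you would need to handle by comparing like with like.

```python
import random
import statistics


def permutation_p_value(
    group_a: list[float],
    group_b: list[float],
    n_iter: int = 10_000,
    seed: int = 0,
) -> float:
    """Two-sided permutation test on the difference in mean ranking position."""
    rng = random.Random(seed)
    observed = abs(statistics.mean(group_a) - statistics.mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    hits = 0
    for _ in range(n_iter):
        # Shuffle group labels and see how often chance alone
        # produces a gap at least as large as the observed one.
        rng.shuffle(pooled)
        diff = abs(
            statistics.mean(pooled[:n_a]) - statistics.mean(pooled[n_a:])
        )
        if diff >= observed:
            hits += 1
    # Add-one correction keeps the estimate away from an impossible p = 0.
    return (hits + 1) / (n_iter + 1)


# Invented average ranking positions (lower is better).
human_only = [4, 9, 12, 7, 15, 6, 11, 8]
ai_assisted = [5, 10, 9, 8, 14, 7, 13, 6]
print(permutation_p_value(human_only, ai_assisted))
```

A large p-value means the data give no evidence that the production method made a difference, which is not the same as proving the two groups are identical.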

For your SEO workflow, stop optimizing for AI detection scores and start optimizing for these factors that actually correlate with rankings:

- Include at least one data point, case study, or specific example that readers can’t find in the top 5 competing articles

- Add genuine expertise markers such as process screenshots, tool-specific settings, or lessons from real implementations

- Structure content around the actual questions your audience asks, verified through People Also Ask boxes, forum threads, or customer support logs

- Update published content when information changes rather than publishing and forgetting

The AI detection industry will continue evolving, and accuracy will likely improve. But the fundamental limitation remains: statistical pattern matching can’t reliably determine authorship. Your time is better spent making content genuinely useful than making it score well on a detector that Google doesn’t use.